An Illustrated Guide to Context Engineering, Prompt Engineering, and The Future of Both

What I've learned about context engineering as an AI PM. Plus the Skill and the prompt I use to pressure-test it.

TL;DR: Context engineering is a systems design discipline that separates working AI from failing AI. It replaces prompt engineering as a core skill for product and workflow builders in 2026. The four canonical strategies are Write, Select, Compress, and Isolate (LangChain framework). Memory architecture splits into episodic, semantic, and procedural layers. Stanford’s ACE framework enables self-improving agents. For PMs and builders, this means owning context architecture as a product decision, not delegating it to engineering.

Last week, I came across a job post that asked for context engineering skills, but the responsibilities clearly described prompt engineering.

These are not synonyms.

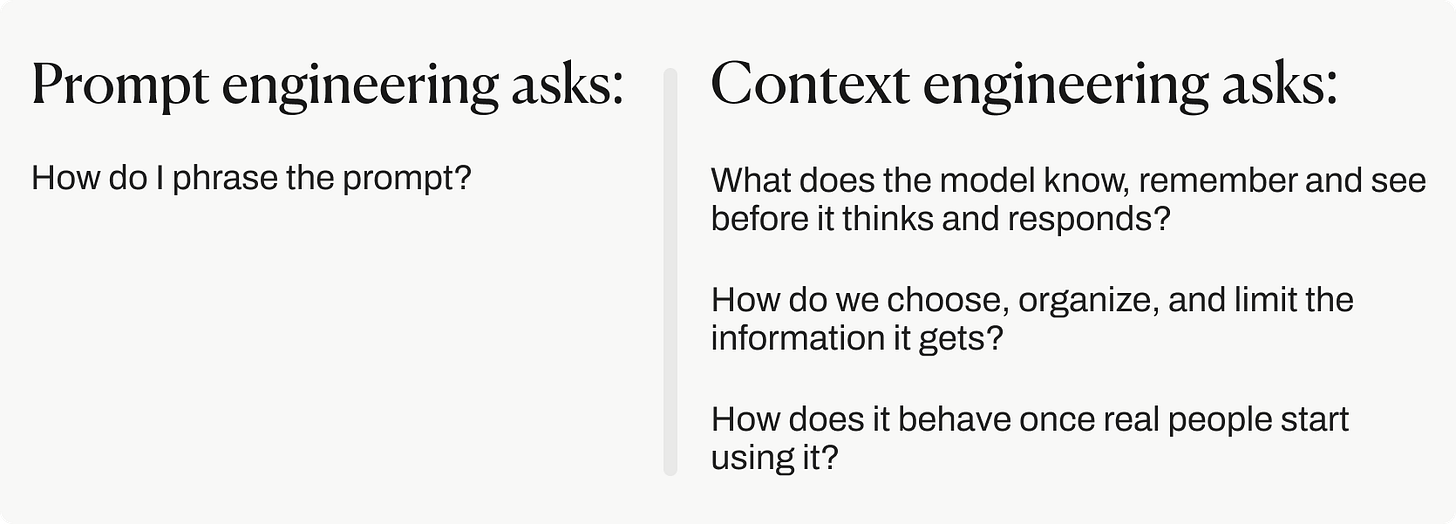

Prompt engineering is deciding what and how to ask the model.

Context engineering is deciding what the model knows when it answers.

One starts a conversation. The other shapes the information ecosystem in which that conversation happens, so AI can complete tasks reliably.

Context engineering is not a better version of prompt engineering. It’s the system around the prompt.

Right now, as everyone offloads work to agents, our job is shifting. We’re learning to do two things extremely well: give the agent the right context, and judge the output. That's the new work.

This post is everything I’ve learned about context engineering as an AI PM and product builder. If someone had shared this level of detail with me when I was starting, I would have saved a lot of hours, credits, and headaches.

Hey, I’m Karo Zieminski 🤗

AI Product Manager and builder.

I write Product with Attitude, an AI newsletter community of 18K subscribers learning to build with AI and developing critical AI literacy through practice. The kind where you sit down on a Saturday morning, follow a guide, and walk away with a working agent, automation, or product. Built by you. Understood by you. Owned by you.

If you’re new here, welcome! Here’s what you might have missed:

→ The Only AI Prompting Guide That Works On Reasoning Models (And Our Cognition)

→ Claude Design Review: 48-Hour Builder’s Test + Hero Prompts

Join 18K readers from around the world and learn with us.

What’s inside

Why prompt engineering is no longer enough

Why bigger context windows do not solve bad context

The 4 strategies every reliable AI system needs

The 6 signs your context window is getting dirty right now

Why PMs should own context architecture as a product decision

How Stanford’s ACE framework points toward self-improving agents

A context window hygiene Skill you can copy

A context architecture prompt you can copy

Part 1

Context Engineering vs Prompt Engineering

The Tweets That Turned Context Engineering Into a Discipline

The term crystallized in June 2025, when Shopify CEO Tobi Lütke posted on X:

I really like the term ‘context engineering’ over prompt engineering. It describes the core skill better: the art of providing all the context for the task to be plausibly solvable by the LLM.

Andrej Karpathy agreed:

[…] the delicate art and science of filling the context window with just the right information for the next step.

That tweet went viral. The mental model they introduced is now canonical.

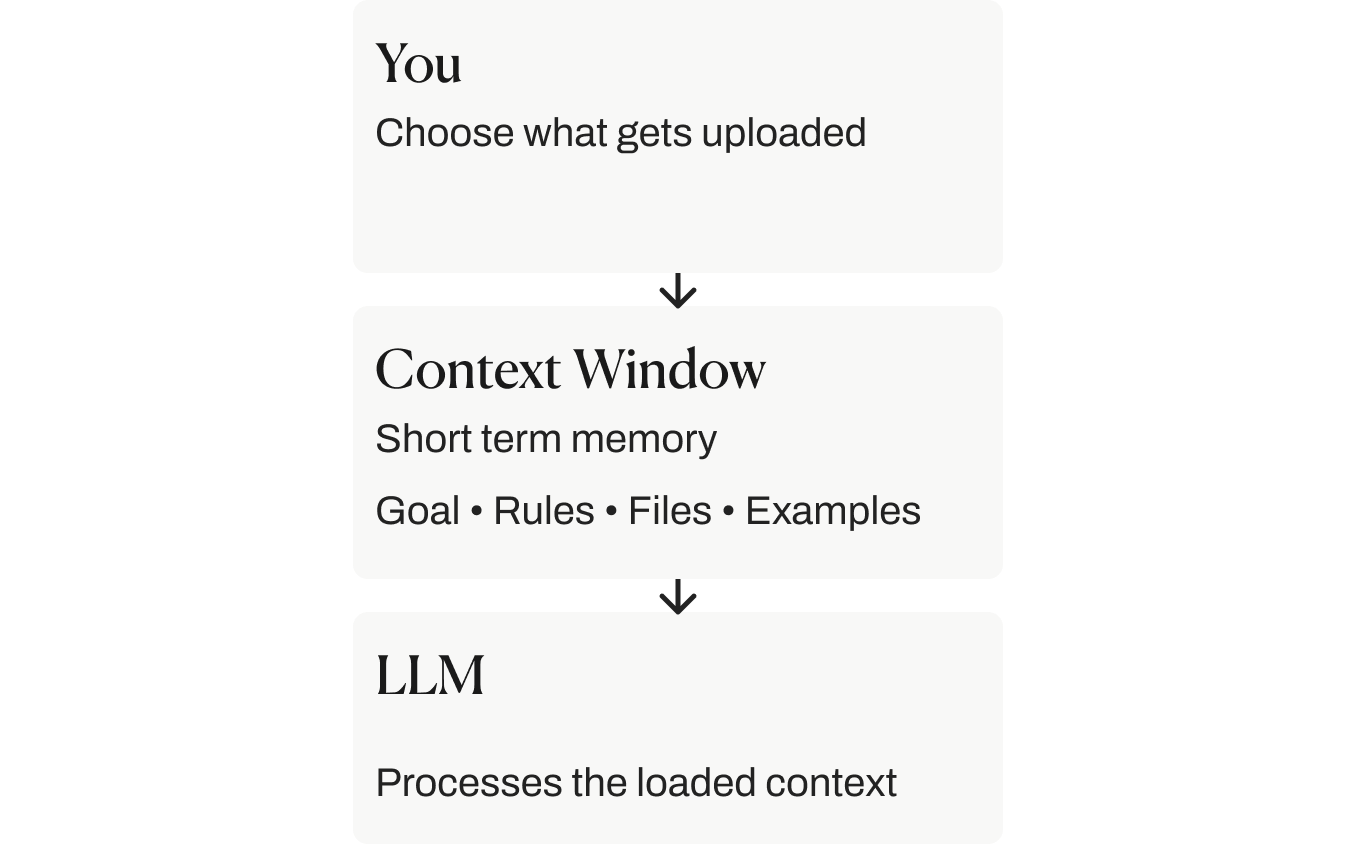

The LLM is the CPU: the brain.

The context window is RAM: the short-term memory.

We are the operating system: responsible for what gets loaded into that memory.

Our job is to load exactly the right data into working memory for each step. Too little context, and the AI guesses. Too much irrelevant context, and the AI gets confused.

The skill is knowing what to include, what to leave out, and when to update it.

Context Engineering vs. Prompt Engineering

As language models graduated from simple instruction followers to full-blown reasoning engines and agentic coworkers, the way we work with them had to evolve too.

Prompt engineering, the skill everyone obsessed over early on, is now just one part of context engineering. Understanding the distinction determines whether we build temporary demos or systems.

Context Engineering vs. Prompt Engineering:

A Comparison Table

Context Engineering vs. Prompt Engineering:

When to Use Which

Most confusion around prompt vs. context engineering comes down to when to use each.

Prompt engineering is fast, lightweight, and works well for one-off tasks.

Context engineering takes more setup (and maintenance!), but it’s what makes systems reliable over time.

This table shows which one to use based on the situation we’re in.

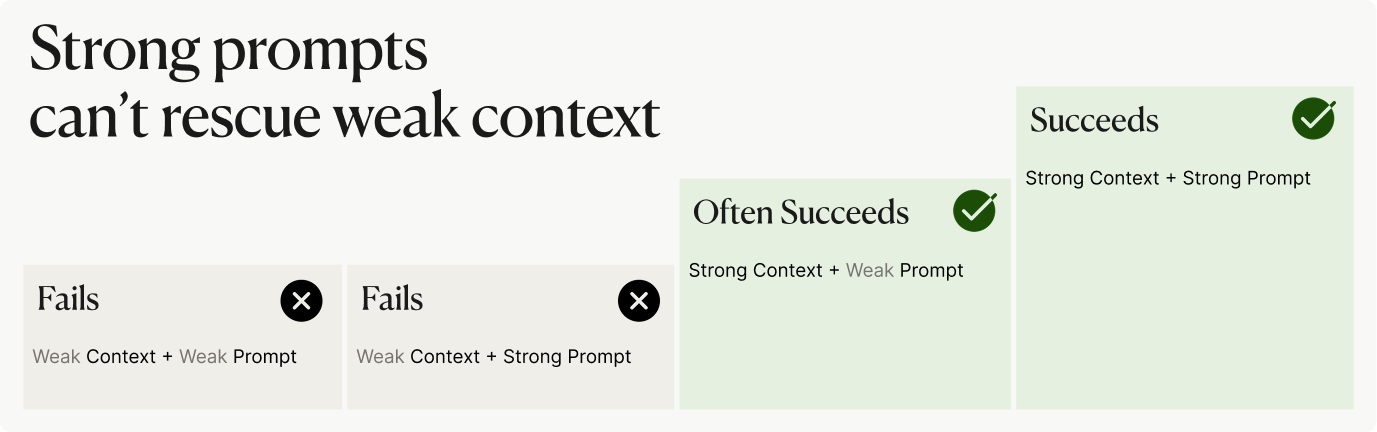

Weak Context Kills Strong Prompts

Gartner predicts 40% of enterprise applications will use task-specific AI agents by late 2026. Every one of those agents lives or dies by how well its context is engineered.

A well-crafted prompt in a poorly engineered context still fails. A poorly crafted prompt in a well-engineered context often succeeds.

That asymmetry is the argument for treating context as the underlying system.

Context Engineering vs. Context Window

The context window is the model’s attention span.

Context engineering is deciding what goes into that attention span, and when.

The context window can only hold so much at once.

Like me trying to cook dinner while my husband is talking about economics, my son is complaining that he’s hungry and the oven starts beeping.

Technically, all the information is there. Emotionally, everyone is in danger. AI works in a similar way.

If you throw everything into the context window, it gets messy fast.

If you choose well, the model can do something useful with it.

The difference is the right information, at the right time, in a space that can handle it.

The context window is not unlimited storage. It is finite, expensive, and acts as the model’s working memory.

Bigger Context Windows Don’t Fix Bad Context

Frontier model marketing in 2026 loves token counts.

Gemini 3.1 Pro, Claude Opus 4.7, Claude Mythos Preview, and GPT 5.5 all offer 1M-token context windows.

A lot of people assume that it automatically means better AI performance.

It doesn’t.

A context window is not unlimited storage. It is finite, expensive, and acts as the model’s working memory, not permanent memory.

A bigger context window simply means the LLM can receive more information at once. It doesn’t mean the model will understand, prioritize, or use that information well.

Context Rot

The context pipeline is how context travels through the system: collected, ordered, updated, retrieved, compressed, and passed from one step to the next.

Context rot is what happens when that pipeline is poorly managed. It shows up when a session, agent, or workflow becomes overloaded with messy summaries, outdated details, repeated instructions, irrelevant files, conflicting decisions, too many tool options, and buried requirements.

Even if the right information is somewhere in the input, the system becomes less reliable when that information is surrounded by clutter.

Like me looking for the can opener while my husband debates something I didn’t ask about.

The can opener may exist.

That doesn’t mean anyone is getting dinner.

Context Structure

A useful way to understand this comes from Stanford’s “Lost in the Middle” paper.

The researchers found that AI models often pay more attention to information at the beginning and the end of a long input. They struggle more when the important detail sits somewhere in the middle.

The beginning sets the instructions.

The end is closest to the generated answer.

The middle has to fight for attention.

Even if the right answer is there, it’s easier for the model to miss it. This is especially true for long context windows.

Having the right information in the context is not enough.

The structure of that information matters.

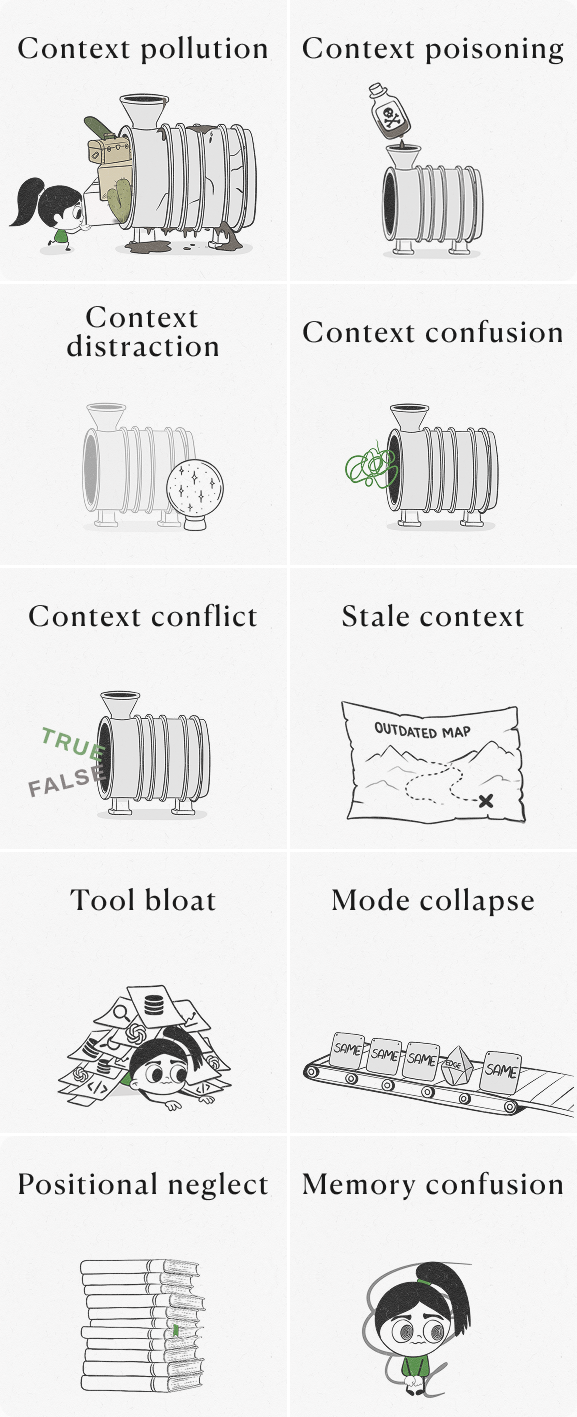

10 Reasons Context Breaks in Real Systems

These are the common ways context breaks in real systems.

Share this with 3 friends or colleagues and you’ll get a free month of premium membership.

1. Context pollution

This happens when we include too much information: full documentation libraries, hundreds of chat turns, old notes, unused examples, irrelevant tool descriptions. The system can’t focus. Important details get buried, and answers become less relevant or inconsistent

2. Context poisoning

This happens when bad or malicious info gets written into memory and persists.

3. Context distraction

This happens when irrelevant but salient info pulls attention.

4. Context confusion

This happens when overlapping or ambiguous info muddies reasoning.

5. Context conflict

This happens when two sources contradict.

6. Stale context

This happens when pre-loaded context is outdated.

The system gives answers based on old or incorrect assumptions, even when better information is available.

7. Tool bloat

Tool bloat breaks agents.

Every registered tool consumes tokens and attention.

This happens when we register every possible tool or action “just in case.” Every tool consumes attention. Every option creates more routing work.

A model with too many tools can struggle to choose the right one, call the wrong one, or invent one that does not exist.

8. Mode collapse

This happens when we keep doing the same thing every time, even when the situation changes and the model gets stuck in a pattern.

The system gives similar answers every time, starts missing exceptions, and quietly fails on unusual cases, and fails silently on edge cases.

9. Positional neglect

This happens when critical information is buried in the middle of a long context. The model may have access to it, but access is not the same as reliable use.

10. Memory confusion

This happens when different kinds of memory are mixed together. Our long-term preferences, yesterday’s failed tool call, and a permanent product rule should not live in the same undifferentiated blob.

They age differently. They fail differently. They should be managed differently.

6 Signs Your Context Window Is Messy Right Now

If any of these are happening, stop and clean up the context before you keep building. That’s exactly what the Context Window Hygiene Skill is for.

1. The agent repeats itself.

2. The agent forgets an earlier decision.

3. The agent invents a tool, file, or function.

4. The AI Asks What You Are Working On

5. Output quality drops mid-session.

6. Each Response Gets More Expensive

The Four Strategies That Make Context Engineering Work (LangChain Framework)

Don’t worry if you’re not familiar with this framework. I’ll walk you through the parts that matter. I’m also sharing a Skill I built from it, which I use to periodically review my own context engineering setup.

LangChain, a developer toolkit for building applications with LLMs, offers a simple way to understand context management: AI systems work better when they build understanding step by step and keep only the most useful information in the model’s working memory.

Anthropic’s guide reinforces this. Systems should build up understanding gradually, layer by layer, and only keep what is necessary in working memory.

These four strategies are what reliable AI systems are built on.

1. Write: save context outside the window

Write means storing important information somewhere stable, outside the model’s limited working memory.

That could be a file, a note, a database, or a project instruction document like CLAUDE.md or AGENTS.md, that are the standard pattern for project-scoped persistent memory. Think of it as the agent’s permanent reference document.

This context stays protected.

It doesn’t get summarized, trimmed or distorted.

If you’re new to rules files, this article is a great place to start:

2. Select: retrieve only what is needed

Select means bringing in only the information the model needs right now.

This is the broader idea behind RAG: the system searches for the most relevant information first.

That search can happen through keyword search, meaning-based search, similarity matching, or a mix of methods.

The best systems don’t stop at finding possible matches. They rerank the results before adding them into context.

The goal is to load only what is useful for the next step, not to load everything that might be useful.

3. Compress: retain only required tokens

Compress means reducing the amount of context while keeping the important parts.

As a task gets longer, the model’s working memory fills up. At some point, the system has to summarize, shorten, or reorganize what came before.

Claude Code does this with “auto-compact”, which summarizes the conversation when the context window exceeds 95%.

For long-running AI agents, this is essential. It’s what makes agents viable for tasks that span hundreds of turns.

No agent, no matter how clever, can carry every detail forever. Try it and you’ll watch a good $200 agent slowly turn into a $2,000 agent that’s worse at its job.

The goal is to preserve what the model still needs to do the job well, not to remember everything.

4. Isolate: split context across agents

Isolate means separating context by role, so each AI agent only sees the information it needs for its specific task.

A customer support agent may need a customer’s account history, past messages, and open tickets.

It doesn’t need product documentation for unrelated competitor products.

When every agent sees everything, irrelevant information can leak into its reasoning and confuse the answer. Role-based context keeps each agent focused.

The goal is to give each specialized agent the right information for its job and keep the rest out: a scoped window tuned to its function.

The Context Window Hygiene Skill

Use it when:

The agent starts repeating itself.

The agent forgets an earlier decision.

The agent invents a tool, file, or function.

The AI suddenly asks what you’re working on.

Output quality drops mid-session.

Or each response gets more expensive.

What comes out:

A handoff note that preserves the decisions.

A persistent

CLAUDE.mdormemory/entry for anything that should outlive the session.A scoped subagent prompt instead of one mega-agent juggling everything.

A live artifact for views you’ll re-open later.

Sometimes a scheduled task for work that can wait.

Copy the Context Window Hygiene Skill.

The Context Spec Generator Prompt

This prompt turns Claude into a context engineering architect that audits the information design of an AI agent before it’s built.

You describe what you’re building, and it returns a ranked list of the five highest-stakes context items, decisions about where each one lives (system prompt, episodic memory, semantic store, or runtime retrieval), freshness requirements, failure modes, observable signals for production, fallbacks for retrieval failures, an eviction order for compression, and one risk you didn’t think to ask about.

It treats context as a product with SLAs rather than as prompt-stuffing, and forces specificity by gating on constraints (model, window size, latency, session length) before answering.

Copy the Context Spec Generator Prompt

Part 2

Context Engineering For PMs

and Builders

This is the part many PMs don’t realize is theirs to own.

Context engineering is not purely an engineering discipline. It sits at the intersection of product strategy, user understanding, and system design. Engineers can build the retrieval infrastructure. They can’t decide what the model should know about the user, when freshness matters, or what should age out.

That’s product work.

The 5-Factor Context Principles Every AI Builder Should Know

The 5-Factor Agents framework is a way of thinking about how to build agents that work long-term. It’s adapted from Heroku’s 12-Factor App that provides engineering-grade principles for production AI systems.

These are the context principles that matter most:

1. Curate, Don’t Accumulate

Don’t keep adding everything to the chat forever and don’t assume the AI will automatically know which parts of a long conversation matter most. Just-in-time retrieval outperforms pre-loaded context for most tasks.

Simple prompt:

Here is the current context:

[the goal], [the current state], [the rules], [the decisions], [what to ignore],

Use only this as the source of truth. Ignore earlier conflicting instructions2. Compact Errors

When something goes wrong, don’t paste the entire mess back into the AI. Long error logs, repeated failures, and messy notes confuse the model. A short summary beats a full dump.Give the AI a compact failure note:

What failed:

What I expected:

What actually happened:

What we already tried:

What I want next:3. Pre-Fetch Context

A good brief at the start saves time, tokens, and frustration. Give the AI the important information before it starts working, not halfway through.

If you keep adding missing details later, the agent may have to revise its plan, undo work, or make assumptions.

4. Keep Context Below Capacity

Keep context under 40% capacity. Don’t wait until the session is overloaded. Headroom is not waste. It’s working space.

5. Use Small, Focused Agents

Sub-agents isolate context across roles. One agent per scoped task beats one mega-agent doing everything.

The PM’s Role in Context Engineering

Here’s the role division I work with on cross-functional teams:

PMs define what goes in each context layer. Engineers build the infrastructure to fetch and store it.

PMs design the orchestration logic, what the model sees when. Engineers implement the orchestration engine that executes it.

PMs decide what ages out, what persists, and what gets re-fetched. Engineers build the pruning rules.

PMs own context as a product surface. Engineers own it as a system surface.

If the PM isn’t doing this, one of two things happens. Either an engineer makes the product decision by default, or nobody makes it and the agent gets every available signal dumped into the window.

The PM’s core translation task looks like this:

“Users want better suggestions” becomes: the system needs access to past rejections, current workspace state, and team preferences.

“Users want more personalization” becomes: capture the user’s writing style, common corrections, and role-specific patterns.

“The agent should feel like it knows me” becomes: define which signals persist across sessions, which expire, and which the user should be able to correct.

The actionable principle: treat context as a product. Iterate it. Prune it. Measure it.

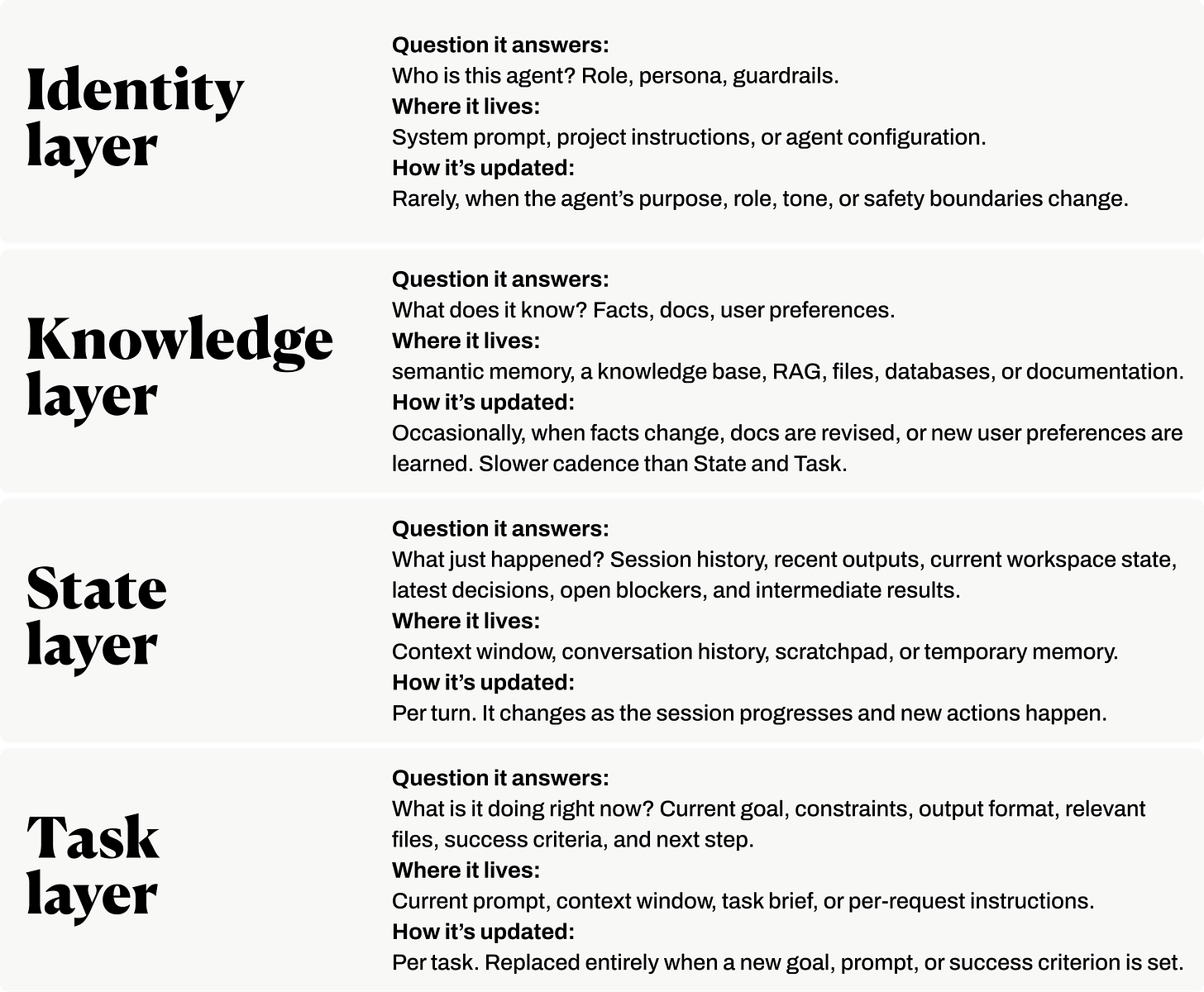

The Context Pyramid: a PM Framework

This four-level context hierarchy framework is inspired by OpenAI PM Miqdad Jaffer and Productboard:

Use the context pyramid as a sorting tool before you build or prompt an AI system. That separation makes agents easier to debug, easier to update, and less likely to rot under their own context.

Part 3

The Future Of Context Engineering and Prompt Engineering

Stanford ACE: The Self-Improving Context Layer

Most writing about context engineering still treats context as something you design once.

But the research frontier is moving toward dynamic context: context that changes, improves, and learns from experience.

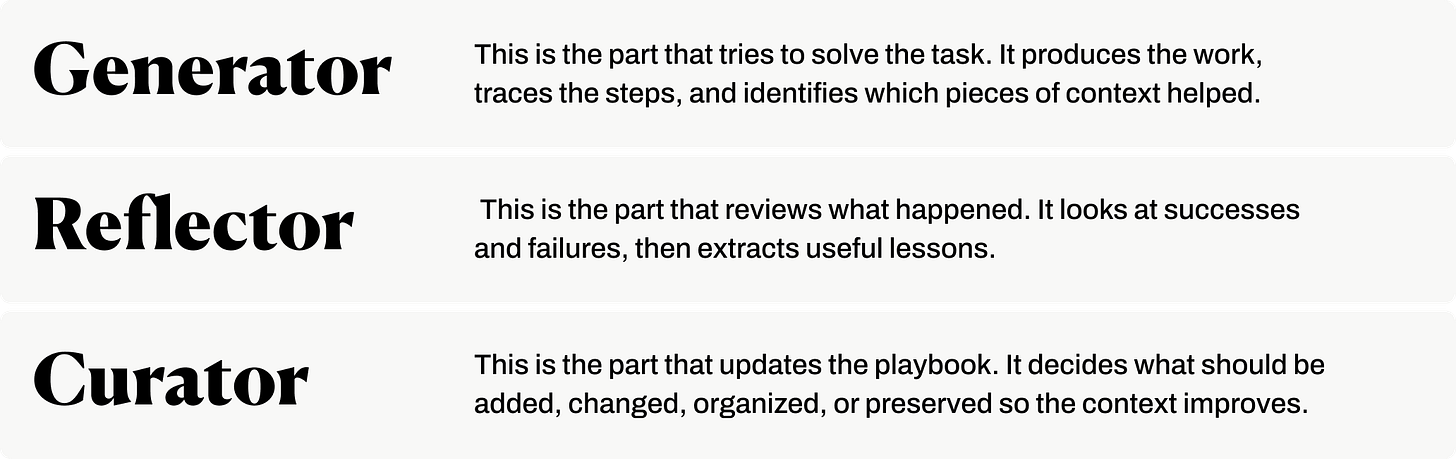

In October 2025, researchers from Stanford University, SambaNova Systems, and UC Berkeley introduced Agentic Context Engineering, or ACE.

The core idea is simple but important: instead of treating context as a fixed prompt, ACE treats it as an evolving playbook the agent can update over time.

Every time an ACE-style agent works on a task, it can notice what helped, what failed, and what should be remembered for next time.

The ACE architecture has three components:

The important shift is that ACE doesn’t improve the model by retraining it.

It improves the environment around the model.

Instead of changing the model’s weights, it updates the instructions, strategies, examples, and lessons the model sees before it works.

The paper reports that ACE improved agent performance by 10.6% on agent benchmarks and 8.6% on finance tasks, while also reducing adaptation cost and latency.

What I’m watching for in 2026

Prompt engineering on the system side

The best AI products won’t ask users to bring perfect prompt and context. They’ll build both layers into the product itself.

You can see this with Perplexity for Finance. Common use cases are already anticipated: stock research, filings, earnings recaps, valuation lookups. Both prompts and context are built into the workflow.

Prompt engineering on the user side

When I built my Palantir dashboard in Perplexity last week, I didn’t prompt at all. I gave it a company name. The agent routed through financial data, filings, transcripts, estimates, market context, and a citation layer.

For common use cases, users won’t write elaborate mega-prompts. Model providers will wrap those tasks in workflows and UX layers.

Context engineering as a product layer

I expect systems that manage their own context: selecting what matters, pruning what expires, carrying forward what should survive the session. For builders, this means we won’t only decide what to feed the model right now. We’ll design how the system learns what to keep next time.

Context becomes an evolving map, not a static brief.

What This Means For You

Context engineering is the systems design discipline that determines whether an AI product ships reliably or collapses under real users.

For product builders, this means owning the context architecture as a product decision. Define what the model needs to know. Decide when it needs to know it. Design how stale information gets pruned.

If you’re a PM and your engineering team is making context decisions without you, that’s not delegation. That’s abdication. Get in the room.

If you’ve been designing context architectures for your AI products and found patterns that work, or patterns that failed spectacularly, drop them in the comments.

WHY SUBSCRIBE ・YOUR BENEFITS・ TOOLS I BUILT・CLAUDE HUB・PERPLEXITY HUB ・VIBE CODING HUB

A clear guide to prompt and context engineering and an important reminder that they are not the same thing when building AI products. Context isn’t just an input; in many cases it *is* the product: the business logic, differentiation, and core value. PMs should absolutely be part of these conversations too, not just engineering teams. Thanks for sharing this, Karo!

Great stuff!!! I loved the side-by-side comparison between the two!