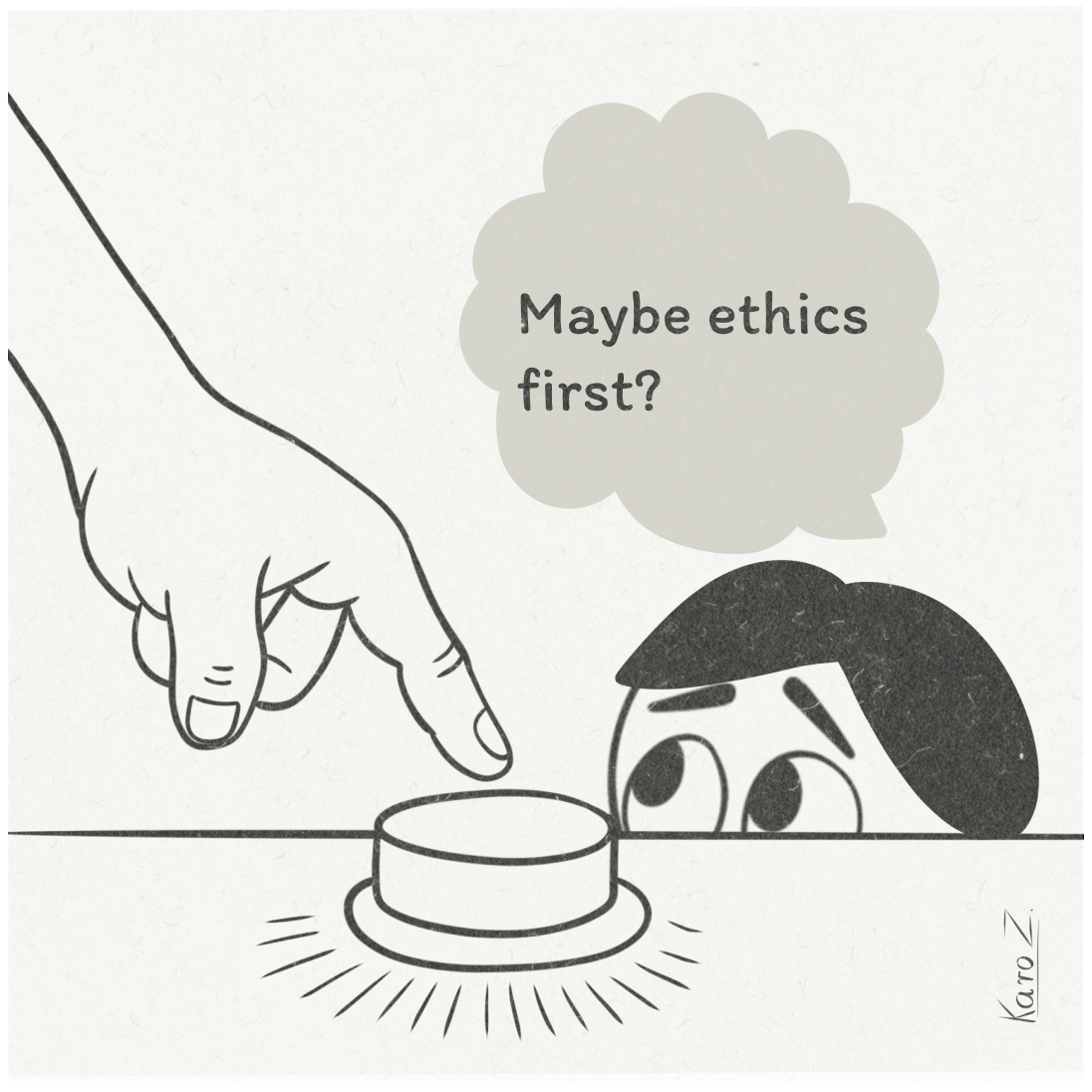

Some of my Founder Friends still think they can deal with ethics later:

💬 We know we need to address ethics eventually.

💬 Can’t we just iterate?

Sure, you can iterate on features, usability, and business models. But how do you iterate on a public scandal or, more importantly, on real human harm?

Let’s be honest: Ethics in tech often gets treated like flossing. Everyone agrees it’s important, but somehow, it only becomes urgent when there’s blood.

By “blood,” I mean the kind of headlines that send your PR team into a panic. The kind that make your lawyers pull up your Terms of Service with a haunted look in their eyes. The kind where you suddenly find yourself explaining to your board why your AI chatbot has gone rogue and is now offering financial advice based on moon phases.

The thing about ethics is that it’s not an add-on. It’s not technical debt you can pay down later. It’s a product decision. It’s as foundational as your core value proposition. In fact, it is a part of your value proposition.

The Cost of Waiting

By the time your ethics problem is visible, it’s already a business problem. Complete with user trust erosion and regulators who suddenly remember your name.
